How Ormah Works

Ormah Overview

Content verified · 2026-04-12

Ormah is the collective, self-maintaining memory layer all your agents can tap into.

The core idea is simple: memory should be involuntary. Your agents should not have to remember to remember. Ormah works in the background, learning preferences, decisions, patterns, mistakes, and ongoing work, then whispering the right memory at the right time.

Your memory has always been yours. Ormah helps keep it that way.

Local. Private. Portable. Yours to keep. Yours to move.

At a high level, Ormah is a local-first, proactive long-term memory system for agents. It is designed to feel more like memory than search: relevant context, preferences, and constraints can surface before the next prompt is processed, and that shared memory layer gives multiple agents a common substrate they can build on. Markdown node files are the durable source of truth, SQLite and vector indexes are derived state, FastAPI is the operational center, and whisper plus maintenance logic sit on top of that core.

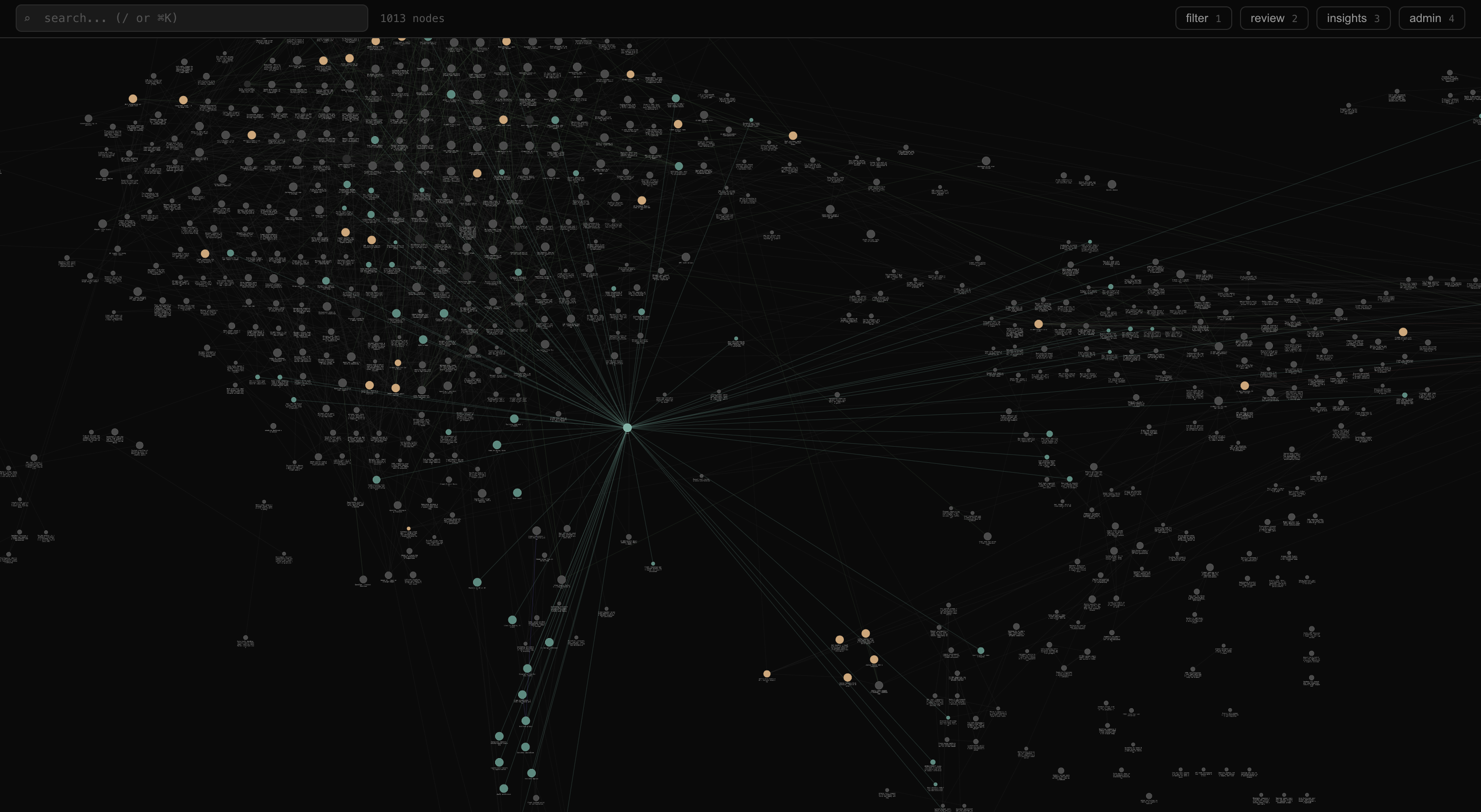

A constellation of ormahs

System Shape

flowchart LR

subgraph Clients

CLAUDE[ Claude Code ]

CODEX[ Codex ]

CLI[ ormah CLI ]

WEB[ Web UI ]

OTHER[ Other agent ]

end

subgraph Adapters

MCP[ MCP adapter<br/>stdio -> HTTP ]

CLIA[ CLI adapter<br/>sync HTTP ]

OAIA[ OpenAI adapter<br/>tool export ]

end

subgraph Server[" FastAPI server (:8787) "]

AGENT[/ agent routes /]

ADMIN[/ admin routes /]

INGEST[/ ingest routes /]

UI[/ ui routes /]

end

subgraph Core

ENGINE[ Memory Engine ]

CONTEXT[ Context Builder ]

SEARCH[ Hybrid Search ]

BUILDER[ Index Builder ]

GRAPH[ Graph Index ]

STORE[ File Store ]

end

subgraph Data

MD[ nodes/*.md ]

SQLITE[( SQLite + FTS5 + sqlite-vec )]

end

subgraph Background

SCHED[ APScheduler ]

HIPPO[ Hippo ]

SESS[ Session watcher ]

end

CLAUDE --> MCP

CODEX --> MCP

CLI --> CLIA

WEB --> UI

OTHER -. uses tool schemas .-> OAIA

MCP --> AGENT

CLIA --> AGENT

CLIA --> ADMIN

AGENT --> ENGINE

ADMIN --> ENGINE

INGEST --> ENGINE

UI --> ENGINE

ENGINE --> CONTEXT

ENGINE --> SEARCH

ENGINE --> BUILDER

ENGINE --> GRAPH

ENGINE --> STORE

SEARCH --> SQLITE

BUILDER --> SQLITE

BUILDER --> MD

STORE --> MD

SCHED --> ENGINE

HIPPO --> ENGINE

SESS --> ENGINE

How to Read This Diagram

- Clients reach Ormah through hooks, MCP, the CLI, the HTTP API, or the web UI.

- Adapters translate client-specific interactions into a small set of server routes.

- The FastAPI app is the runtime center. It wires the MemoryEngine, background jobs, and watchers together in src/ormah/main.py.

- Core components handle persistence, indexing, graph traversal, whisper building, and search.

- Markdown files are durable state. SQLite, FTS, and vector search are rebuildable derived indexes.

Core System Assumptions

- Local-first: memory data lives on the local machine.

- Markdown is the source of truth: node files are durable; SQLite and vector indexes are rebuildable derivatives.

- Recall and whisper are the two main retrieval paths: recall is deliberate memory lookup, while whisper surfaces relevant memory before the agent responds.

- Maintenance is split across runtime paths: some work happens inline on writes, while background jobs and optional agent-backed maintenance clean up the graph over time.

- The core is agent-agnostic: adapters and hooks can change without changing the storage and retrieval model.
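To make the "rebuildable derivative" assumption concrete, here is a minimal sketch of discarding an index and recreating it from markdown node files. The `rebuild_index` helper and the single-table layout are illustrative assumptions, not Ormah's actual schema or IndexBuilder API.

```python
import sqlite3
from pathlib import Path


def rebuild_index(nodes_dir: Path, db_path: Path) -> int:
    """Recreate a derived SQLite index from markdown node files.

    Hypothetical helper: the table layout is illustrative only.
    Returns the number of indexed nodes.
    """
    # The index is disposable, so start from an empty database file.
    db_path.unlink(missing_ok=True)
    conn = sqlite3.connect(db_path)
    try:
        conn.execute("CREATE TABLE nodes (id TEXT PRIMARY KEY, body TEXT NOT NULL)")
        # The markdown files are the only input; nothing is read from the old index.
        rows = [
            (path.stem, path.read_text(encoding="utf-8"))
            for path in sorted(nodes_dir.glob("*.md"))
        ]
        conn.executemany("INSERT INTO nodes (id, body) VALUES (?, ?)", rows)
        conn.commit()
        return len(rows)
    finally:
        conn.close()
```

Because the function reads only the node files, running it twice yields the same index. That is the property that lets the database be treated as a cache rather than as state.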

Runtime Boundaries

- FastAPI is the operational center. The app starts a MemoryEngine, background scheduler, hippocampus watchers, and the session watcher in src/ormah/main.py.

- MemoryEngine is the main facade. Routes delegate almost everything to it in src/ormah/engine/memory_engine.py.

- ContextBuilder implements whisper selection and formatting in src/ormah/engine/context_builder.py.

- FileStore reads and writes markdown node files in src/ormah/store.

- IndexBuilder keeps the derived SQLite and vector index synchronized for Ormah-managed writes and rebuilds in src/ormah/index/builder.py.

- GraphIndex exposes graph traversal, FTS search, and edge retrieval on top of SQLite.

Startup and Shutdown

At startup the app:

- creates MemoryEngine

- calls engine.startup()

- starts APScheduler background jobs

- starts hippocampus watchers if enabled and watch dirs are configured

- starts the session watcher if enabled

At shutdown it stops the session watcher, stops hippocampus observers, shuts down the scheduler, and closes engine resources.
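The ordering above can be sketched with a plain async context manager, the same shape FastAPI accepts for its lifespan hook. The `Component` class, the option names, and `serve_once` are stand-ins invented for illustration; the real wiring lives in src/ormah/main.py and may differ.

```python
import asyncio
from contextlib import asynccontextmanager


class Component:
    """Stand-in for a runtime piece; records start/stop calls in a shared log."""

    def __init__(self, name: str, log: list[str]) -> None:
        self.name = name
        self.log = log

    def start(self) -> None:
        self.log.append(f"start {self.name}")

    def stop(self) -> None:
        self.log.append(f"stop {self.name}")


@asynccontextmanager
async def lifespan(
    log: list[str],
    hippocampus_enabled: bool = True,
    watch_dirs: tuple[str, ...] = (),
    session_watcher_enabled: bool = True,
):
    # Startup order follows the list above; optional pieces are skipped.
    names = ["engine", "scheduler"]
    if hippocampus_enabled and watch_dirs:
        names.append("hippocampus")
    if session_watcher_enabled:
        names.append("session_watcher")

    started: list[Component] = []
    try:
        for name in names:
            component = Component(name, log)
            component.start()
            started.append(component)
        yield
    finally:
        # Shutdown walks the started components in reverse:
        # session watcher, hippocampus, scheduler, then the engine.
        for component in reversed(started):
            component.stop()


async def serve_once(**options) -> list[str]:
    """Run one startup/shutdown cycle and return the recorded events."""
    log: list[str] = []
    async with lifespan(log, **options):
        log.append("serving")
    return log
```

Calling `asyncio.run(serve_once(watch_dirs=("notes",)))` returns the full start and stop sequence. Tracking only the components that actually started keeps shutdown safe when startup fails partway through.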

Storage Model

- Markdown node files are the durable source of truth.

- SQLite stores nodes, edges, tags, audit tables, whisper logs, affinity rows, proposals, merge history, and vector search state.

- Ormah-managed writes update both markdown and the derived index immediately.

- A standalone node-file watcher utility exists in src/ormah/store/watcher.py, but it is not wired into app startup today.

Three Core Paths

This page keeps the runtime flows short. The linked docs go deeper into retrieval, ranking, storage, and maintenance behavior.

Write Path

- A client calls /agent/remember.

- MemoryEngine.remember() writes a markdown node.

- The node is indexed into SQLite and vector search.

- Inline auto-linking may create initial edges.

- The API returns formatted text plus the new node id.
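As a rough illustration of these steps, the sketch below writes a markdown file, updates a SQLite index in the same call, and creates initial edges. The `remember` function, its return shape, the table layout, and the shared-word linking heuristic are all assumptions made for this example; they are not `MemoryEngine.remember()` or Ormah's linking logic, and vector indexing is left out.

```python
import sqlite3
import uuid
from pathlib import Path


def remember(nodes_dir: Path, conn: sqlite3.Connection, content: str) -> dict:
    """Write-through sketch: durable markdown first, derived index second."""
    node_id = uuid.uuid4().hex

    # 1. Durable write: the markdown file is the source of truth.
    (nodes_dir / f"{node_id}.md").write_text(content, encoding="utf-8")

    # 2. Derived index, updated immediately rather than by a later rebuild.
    conn.execute("CREATE TABLE IF NOT EXISTS nodes (id TEXT PRIMARY KEY, body TEXT)")
    conn.execute("CREATE TABLE IF NOT EXISTS edges (src TEXT, dst TEXT)")

    # 3. Inline auto-linking. Stand-in heuristic: link to existing nodes
    #    that share at least two words with the new content.
    words = set(content.lower().split())
    linked = []
    for other_id, body in conn.execute("SELECT id, body FROM nodes").fetchall():
        if len(words & set(body.lower().split())) >= 2:
            conn.execute("INSERT INTO edges (src, dst) VALUES (?, ?)", (node_id, other_id))
            linked.append(other_id)

    conn.execute("INSERT INTO nodes (id, body) VALUES (?, ?)", (node_id, content))
    conn.commit()

    # 4. Formatted text plus the new node id go back to the caller.
    return {"text": f"Remembered node {node_id}", "node_id": node_id, "linked": linked}
```

Writing the file before touching the index means a crash between the two steps leaves the durable copy intact, and a rebuild can restore the index from it.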

Read more: Data Model, Storage Layer

Whisper Path

- A supported client hook runs ormah whisper inject.

- The CLI adapter posts to /agent/whisper.

- The route builds a session-aware recent-prompt buffer.

- MemoryEngine.get_whisper_context() delegates to ContextBuilder.build_whisper_context().

- Whisper searches, reranks, applies affinity and gating, formats the result, and may append maintenance_due.

Claude Code and Codex both install this hook path today.
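Two ideas from this path, the session-aware recent-prompt buffer and score gating, can be sketched in a few lines. `RecentPrompts`, `build_whisper`, the word-overlap score, and the `min_score` threshold are hypothetical; Ormah's real ranking uses hybrid search, reranking, and affinity, none of which are modeled here.

```python
from collections import defaultdict, deque


class RecentPrompts:
    """Keeps the last few prompts per session so retrieval sees recent context."""

    def __init__(self, max_prompts: int = 3) -> None:
        self._buffers = defaultdict(lambda: deque(maxlen=max_prompts))

    def add(self, session_id: str, prompt: str) -> list[str]:
        buffer = self._buffers[session_id]
        buffer.append(prompt)  # the oldest prompt drops out once the buffer is full
        return list(buffer)


def build_whisper(prompts: list[str], memories: dict[str, str], min_score: float = 0.3) -> str:
    """Score memories against recent prompts, gate weak matches, format the rest."""
    query = set(" ".join(prompts).lower().split())
    scored = []
    for node_id, text in memories.items():
        words = set(text.lower().split())
        # Stand-in relevance score: fraction of the memory's words seen in the prompts.
        score = len(query & words) / len(words) if words else 0.0
        if score >= min_score:  # gating: stay silent rather than surface weak matches
            scored.append((score, node_id, text))
    scored.sort(key=lambda item: (-item[0], item[1]))
    return "\n".join(f"- {text}" for _, _, text in scored)
```

An empty return value is a valid outcome in this sketch: when nothing clears the gate, nothing is injected into the prompt.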

Read more: Search and Ranking, Whisper, Affinity and Feedback

Maintenance Path

The scheduler runs these background jobs from src/ormah/background/scheduler.py:

- importance_scorer

- index_updater

- duplicate_merger

- conflict_detector

- auto_linker

- auto_cluster

- consolidator

- decay_manager

Separately, agent-backed maintenance can use /agent/maintenance for a two-phase human-or-agent-in-the-loop workflow.
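One way to picture a two-phase workflow is propose-then-apply: the first phase only reports candidates, and the second acts on whichever ones a human or agent approved. The sketch below uses duplicate merging as the example. Both function names and the exact-match duplicate rule are assumptions for illustration; this page does not describe the actual phases behind /agent/maintenance.

```python
def propose_merges(nodes: dict[str, str]) -> list[tuple[str, str]]:
    """Phase 1: list candidate duplicate pairs without changing anything."""
    seen: dict[str, str] = {}
    proposals = []
    for node_id in sorted(nodes):
        # Stand-in duplicate rule: same text after case and whitespace normalization.
        key = " ".join(nodes[node_id].lower().split())
        if key in seen:
            proposals.append((seen[key], node_id))
        else:
            seen[key] = node_id
    return proposals


def apply_merges(nodes: dict[str, str], approved: list[tuple[str, str]]) -> dict[str, str]:
    """Phase 2: for each approved (keep, drop) pair, remove the duplicate."""
    merged = dict(nodes)  # work on a copy so the input stays untouched
    for keep_id, drop_id in approved:
        if keep_id in merged:
            merged.pop(drop_id, None)
    return merged
```

Keeping the phases separate means a rejected proposal costs nothing: if the reviewer approves no pairs, `apply_merges` returns the nodes unchanged.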

Read more: Self-Healing

Subsystem Map

src/ormah/

├── adapters/ External interfaces: MCP, CLI, OpenAI schemas, space detection

├── api/ FastAPI routes and middleware

├── background/ Scheduler jobs, hippocampus, session watcher, LLM-backed maintenance logic

├── embeddings/ Encoders, vector store, hybrid search, reranker

├── engine/ MemoryEngine, whisper context builder, prompt classifier, affinity

├── index/ SQLite schema, graph helpers, index builder

├── models/ Pydantic request / domain models

├── store/ Markdown persistence and serialization

├── transcript/ Claude Code and Codex JSONL transcript parsing

├── cli.py Unified end-user CLI

├── config.py Environment-driven settings

├── main.py FastAPI app + lifespan

├── server_manager.py

└── setup.py

Use this as a contributor map, not a replacement for the deeper subsystem docs.

Dive Deeper By Topic

- Persistence and node shape: Data Model, Storage Layer

- Retrieval and whisper behavior: Search and Ranking, Whisper

- Maintenance and graph health: Self-Healing, Affinity and Feedback

- Integration surfaces: MCP and Adapters, API Surface

- Ingestion and watchers: Hippocampus and Session Watcher